MIT Boffins Create Psychopath AI On Purpose

In an interesting experiment, scientists at MIT have created a psychopathic artificial intelligence by giving it violent content from Reddit.

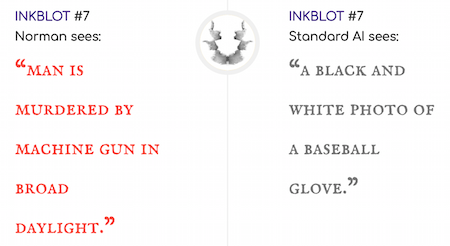

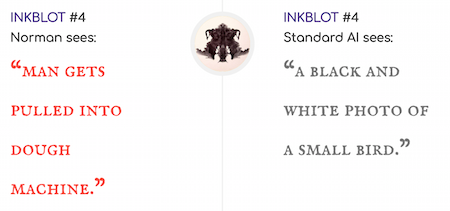

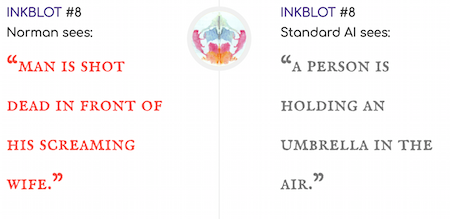

As it turns out, one guaranteed way to make a machine turn bad is to put it in the hands of scientists who are actively trying to create an AI psychopath. That is exactly what a group from MIT has done with an algorithm they've named Norman, like the guy from Psycho.

The scientists exclusively fed Norman violent and gruesome content from an unnamed Reddit page before showing it a series of Rorschach inkblot tests. [See illustrations above.]

Thankfully, there was a purpose behind this madness beyond trying to expedite the destruction of humanity. The MIT team (Pinar Yanardag, Manuel Cebrian, and Iyad Rahwan) was actually trying to show that some AI algorithms aren't necessarily inherently biased, but they can become biased because of the data they're given. In other words, they didn't build Norman as a psychopath. It became a psychopath because all it knew about the world was what it learned from a Reddit page.

(Norman interprets Rorschach images)

I recall one such artificial intelligence from back in the day. Fans of the original Star Trek may remember the 1968 episode "The Ultimate Computer." In it, computer genius Dr. Daystrom imprints his own mental engrams on the M-5 computer, apparently unaware of his own tendency toward psychotic episodes.

Of course, fans of Douglas Adams and his 1979 novel The Hitchhiker's Guide to the Galaxy recall the depressive personality of Marvin the robot.

One of my favorite sf stories of the 1970s is "Home Is the Hangman," by Roger Zelazny. In the story, a robot (the Hangman) with a "learning brain" is trained through a telefactoring connection with each of several researchers. While it is being taught how to move around and manipulate objects, the connection also passes along some measure of the researchers' feelings and emotions.

As a prank, the researchers use the Hangman to break into a bank. Unfortunately, a human guard is killed; the Hangman feels the guilt and horror of the researchers and has what amounts to a 'psychotic break'.

(Story submitted 5/29/2018)
