Watch What People Are Seeing Via Brain Scanning

Researchers at Purdue have developed a system that shows what people are seeing in real-world videos; the video is decoded from their fMRI brain scans.

(Human brain decoded)

Convolutional neural network (CNN) driven by image recognition has been shown to be able to explain cortical responses to static pictures at ventral-stream areas. Here, we further showed that such CNN could reliably predict and decode functional magnetic resonance imaging data from humans watching natural movies, despite its lack of any mechanism to account for temporal dynamics or feedback processing. Using separate data, encoding and decoding models were developed and evaluated for describing the bi-directional relationships between the CNN and the brain. Through the encoding models, the CNN-predicted areas covered not only the ventral stream, but also the dorsal stream, albeit to a lesser degree; single-voxel response was visualized as the specific pixel pattern that drove the response, revealing the distinct representation of individual cortical location; cortical activation was synthesized from natural images with high-throughput to map category representation, contrast, and selectivity. Through the decoding models, fMRI signals were directly decoded to estimate the feature representations in both visual and semantic spaces, for direct visual reconstruction and semantic categorization, respectively. These results corroborate, generalize, and extend previous findings, and highlight the value of using deep learning, as an all-in-one model of the visual cortex, to understand and decode natural vision.
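The "encoding" direction described in the abstract, predicting fMRI voxel responses from CNN features, can be illustrated with a small sketch. Everything here is an assumption made for illustration: the data are synthetic, ridge regression stands in for whatever regression the authors actually used, and the names `fit_ridge` and `voxelwise_correlation` are hypothetical helpers, not part of the published work.

```python
import numpy as np

def fit_ridge(X, Y, alpha=1.0):
    """Closed-form ridge regression: W = (X^T X + alpha*I)^-1 X^T Y."""
    n_features = X.shape[1]
    gram = X.T @ X + alpha * np.eye(n_features)
    return np.linalg.solve(gram, X.T @ Y)

def voxelwise_correlation(Y_true, Y_pred):
    """Pearson correlation between measured and predicted responses, per voxel."""
    yt = Y_true - Y_true.mean(axis=0)
    yp = Y_pred - Y_pred.mean(axis=0)
    denom = np.linalg.norm(yt, axis=0) * np.linalg.norm(yp, axis=0)
    return (yt * yp).sum(axis=0) / denom

rng = np.random.default_rng(0)
n_train, n_test, n_features, n_voxels = 400, 100, 20, 5

# Synthetic stand-ins for CNN features: rows are time points, columns are features.
features_train = rng.standard_normal((n_train, n_features))
features_test = rng.standard_normal((n_test, n_features))

# Assumed ground-truth mapping plus noise, used only to simulate "voxel" signals.
true_weights = rng.standard_normal((n_features, n_voxels))
voxels_train = features_train @ true_weights + 0.5 * rng.standard_normal((n_train, n_voxels))
voxels_test = features_test @ true_weights + 0.5 * rng.standard_normal((n_test, n_voxels))

# Fit on one data set, evaluate on separate data, as the abstract describes.
weights = fit_ridge(features_train, voxels_train, alpha=1.0)
predicted = features_test @ weights
scores = voxelwise_correlation(voxels_test, predicted)
print("held-out correlation per voxel:", np.round(scores, 2))
```

The key design point is the split between training and test data: the model is scored only on responses it was not fitted to, which is what makes a high per-voxel correlation meaningful.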

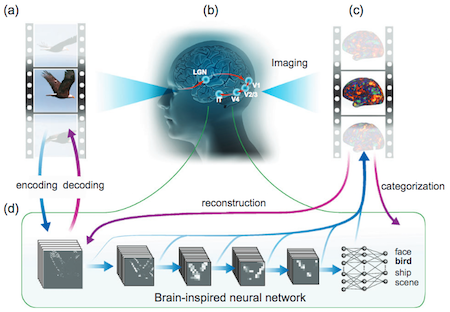

(Watching in near-real-time what the brain sees) Visual information generated by a video (a) is processed in a cascade from the retina through the thalamus (LGN area) to several levels of the visual cortex (b), detected from fMRI activity patterns (c) and recorded. A powerful deep-learning technique (d) then models this detected cortical visual processing. Called a convolutional neural network (CNN), this model transforms every video frame into multiple layers of features, ranging from orientations and colors (the first visual layer) to high-level object categories (face, bird, etc.) in semantic (meaning) space (the eighth layer). The trained CNN model can then be used to reverse this process, reconstructing the original videos and even creating new videos that the CNN model had never watched.

(credit: Haiguang Wen et al./Cerebral Cortex)
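The reverse, "decoding" direction in the caption, going from brain activity back to high-level categories in semantic space, can be sketched in the same spirit. This is a toy illustration under stated assumptions, not the researchers' pipeline: the voxel data are simulated, the linear decoder and nearest-prototype classifier are stand-ins chosen for simplicity, and the category names, `mixing` matrix and `simulate` helper are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(1)
categories = ["face", "bird", "car"]  # illustrative labels only
n_semantic, n_voxels = 8, 50

# Assumed category prototypes in a small "semantic space".
prototypes = 3.0 * rng.standard_normal((len(categories), n_semantic))
# Assumed linear mixing from semantic features to simulated voxel signals.
mixing = rng.standard_normal((n_semantic, n_voxels))

def simulate(n_per_class):
    """Generate simulated (voxels, semantic features, labels) for each category."""
    labels = np.repeat(np.arange(len(categories)), n_per_class)
    semantic = prototypes[labels] + 0.3 * rng.standard_normal((labels.size, n_semantic))
    voxels = semantic @ mixing + 0.5 * rng.standard_normal((labels.size, n_voxels))
    return voxels, semantic, labels

voxels_train, semantic_train, _ = simulate(60)
voxels_test, _, labels_test = simulate(20)

# Linear decoder (ridge regression): voxel pattern -> semantic feature vector.
alpha = 1.0
gram = voxels_train.T @ voxels_train + alpha * np.eye(n_voxels)
decoder = np.linalg.solve(gram, voxels_train.T @ semantic_train)
decoded = voxels_test @ decoder

# Categorize each decoded vector by its nearest prototype in semantic space.
distances = np.linalg.norm(decoded[:, None, :] - prototypes[None, :, :], axis=2)
predicted_labels = distances.argmin(axis=1)
accuracy = (predicted_labels == labels_test).mean()
print("decoded category for first test scan:", categories[predicted_labels[0]])
print("held-out accuracy:", accuracy)
```

Reconstructing actual video frames would require a further step that maps decoded features back to pixels, which this sketch deliberately leaves out.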

In "No, No, Not Rogov!", a 1958 story by Cordwainer Smith, an espionage machine is described that would actually let its operator see what another person saw by probing their brain:

He had then turned away from the reception of pure thought to the reception of visual and auditory images. Where the nerve-ends reached the brain itself, he had managed over the years to distinguish whole packets of microphenomena, and on some of these he had managed to get a fix. With infinitely delicate tuning he had succeeded one day in picking up the eyesight of their second chauffeur... and had managed to see through the other man's eyes as the other man, all unaware, washed their Zis limousine sixteen hundred meters away...

(Read more about Cordwainer Smith's espionage machine)

Via KurzweilAI.

(Story submitted 11/9/2017)


